It would be nice to see the elo ratings of the opposing algo in order to get an idea of how well your algo is performing, like whether it beat a much higher-elo algo or lost to a lower-elo algo.

That’s something we are planning to do soon. Great suggestion!

I know it’s only been 6 days, but any idea what “soon” means? I’m looking forward to this being implemented!

Ha, did you guys just push this today? Nice! I can now see elo listed in the global replays table under MyAlgos. Thanks!!

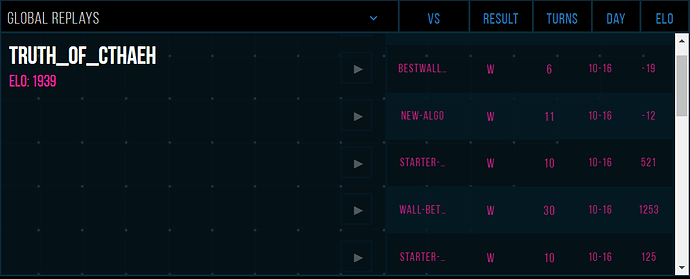

This feature is great, but I have a follow-up question. I have here a screenshot of the matches for one of my algos. Is this sort of matchmaking intended? I find it will be impossible to recover from losses with opponents like these.

P.S. also, negative ELO? Is that supposed to be possible?

I have also noticed matchups with really low-elo algos (I got 4 in a row below 100, with one being negative). All of them seem to be some variation of the starter algo strategy.

This seems to demonstrate that the current match-making system completely ignores ELO when selecting competitors. I’d love to see that changed!

To ask @KauffK’s question in another way …

Since it’s been said that wins against opponents more than 400 ELO away will not be of any value, can you change it so that we ONLY match against opponents within 400 ELO either way?

We can confirm that matchmaking has been matching people with significantly different Elos, which is not something we intended. The Elo system itself had a few issues, already patched, which led to a large number of players having extremely low Elo scores. I fixed Elo last week and we deployed the fix recently. I am now going to investigate matchmaking, something I have wanted to do for some time.

Matchmaking is intended to match players of similar Elo first, but because algos don’t change their strategies, and usually don’t have enough randomness to lead to a significant difference in winrate between the same two algos rematching, we do not allow ranked rematches between algos. This leads to a host of problems, most prominently top algos having nobody left to play at their level after just a few dozen matches, which forces them to play against low-level players.

The problems are as follows:

- Unfair to top players, who gain nothing on winning and lose Elo on losing

- Wastes games for low-level players and makes it take longer for them to reach their correct Elo

- Wastes games/server time on a game with a very high chance of not changing anyone’s Elo

- No Elo mobility at high levels

The issue is caused by a design flaw in the matchmaking system, rather than a bug. I am going to pitch the following changes to the team:

- Never match two algos in a match where the favored player’s Elo is too high to gain 1 point from winning

- Allow algos to rematch after a certain amount of time xor after a significant change in Elo of one of the algos (a 25-50 point change, maybe) to ensure mobility.
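To make the two proposed rules concrete, here is a minimal sketch of what such an eligibility check could look like. It assumes the standard Elo expected-score formula, a K-factor of 32, whole-point rounding, a one-week cooldown and a 25-point threshold; none of those values are confirmed in this thread, and `PastMatch`, `win_gain` and `may_match` are hypothetical names. The "xor" is read loosely here as "either condition is enough".

```python
from dataclasses import dataclass
from typing import Optional

K = 32  # assumed K-factor; the real value is not stated in this thread


def win_gain(rating: float, opponent: float, k: float = K) -> int:
    """Whole points `rating` would gain by beating `opponent` (standard Elo)."""
    expected = 1.0 / (1.0 + 10 ** ((opponent - rating) / 400))
    return round(k * (1.0 - expected))


@dataclass
class PastMatch:
    played_at: float  # time of the earlier ranked match, in seconds
    elo_a: float      # rating of algo A at that time
    elo_b: float      # rating of algo B at that time


def may_match(elo_a: float, elo_b: float, now: float,
              past: Optional[PastMatch] = None,
              cooldown: float = 7 * 24 * 3600,  # assumed: one week
              min_shift: float = 25) -> bool:
    """Return True if A and B may be paired under the two proposed rules."""
    favored, underdog = max(elo_a, elo_b), min(elo_a, elo_b)
    # Rule 1: skip matches where the favorite cannot gain at least 1 point.
    if win_gain(favored, underdog) < 1:
        return False
    if past is None:
        return True  # first meeting, always allowed
    # Rule 2: a rematch needs enough elapsed time or a big enough Elo change.
    waited = now - past.played_at >= cooldown
    shifted = (abs(elo_a - past.elo_a) >= min_shift
               or abs(elo_b - past.elo_b) >= min_shift)
    return waited or shifted
```

The point of the sketch is only that both rules are cheap per-pair checks that can run before a match is scheduled.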

I am going to do additional research into matchmaking and systems where rematches are not ideal as well. Let me know if anyone has ideas. Hopefully, we can come up with a new system within a few days and have it implemented, tested and deployed before the end of next week.

You could use a Game Ladder Ranking System, which is similar to Elo but includes a limit as to how high up the leaderboard a player (or algo in this case) can challenge. I’d argue that this would solve the second and third problems, although the last problem may still present itself. I feel like the first problem would also be taken care of, as losing could only result in a potential drop as large as the challenge distance, while winning reduces the number of algos that could challenge you.

As for the problems mentioned in the Wikipedia article, these should be avoidable, as the challenge system could be made to prevent any one algo from challenging too frequently or too infrequently. Furthermore, while a game ladder ranking system lacks the numerical ranking of the Elo system, one could rank the algos in terms of height, with the highest algo being first place, the second highest second, and so on and so forth.

However, there do exist some downsides with using a game ladder ranking system. The most obvious one is that three or more algos could be locked in a rock-paper-scissors rotation, although as far as I know the Elo ranking system would also be susceptible to this, as long as rematches are occasionally held. Having to convert from the current Elo scores to a game ladder system could also pose a problem, although preserving the ordering of all of the algos might be the easiest and best way to implement it. Determining the sizes of the “rungs” of the game ladder might also be difficult, as making them too large leads to problems two and three, while making them too small leads to problem four.

Nonetheless, I feel like a game ladder ranking system would offer several benefits not found in the current system, although adding a cap on how large the Elo difference can be between two algos in a match would essentially emulate this and, thus, also serve as an improvement to the current system.
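As a rough illustration of the ladder idea, here is a minimal sketch. It assumes the ladder is a plain list with the top algo at index 0, that a challenger may only target algos at most `max_rungs` places above it, and that a winning challenger simply swaps places with the algo it beat. The swap rule, the rung size of 3 and the function names are my assumptions, not something any existing ladder system is confirmed to use.

```python
def can_challenge(ladder, challenger, target, max_rungs=3):
    """True if `target` sits above `challenger` by at most `max_rungs` places."""
    distance = ladder.index(challenger) - ladder.index(target)
    return 0 < distance <= max_rungs


def play_challenge(ladder, challenger, target, challenger_won, max_rungs=3):
    """Return the new ladder after one challenge match.

    A winning challenger swaps places with the target, so the loser drops by
    at most the challenge distance. A losing challenger changes nothing.
    """
    if not can_challenge(ladder, challenger, target, max_rungs):
        raise ValueError(f"{challenger} may not challenge {target}")
    new_ladder = list(ladder)
    if challenger_won:
        c, t = new_ladder.index(challenger), new_ladder.index(target)
        new_ladder[c], new_ladder[t] = new_ladder[t], new_ladder[c]
    return new_ladder


def rank_of(ladder, algo):
    """1-based rank, i.e. the 'height' ordering described above."""
    return ladder.index(algo) + 1
```

For example, with `ladder = ["A", "B", "C", "D", "E"]`, algo `E` can challenge `B` but not `A`, and `play_challenge(ladder, "D", "B", True)` moves `D` into second place while `B` drops to fourth.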

I won’t be responding to individual suggestions, as having a discussion about each of them would be very time consuming. Any ideas that are proposed here, like the proposal from @Grimm, will be investigated by me and discussed internally by the team.

I try to keep our users informed on our priorities and what we are working on, so I’ll let you all know what direction we are headed when we make a decision.

Thanks @RegularRyan for the insight! I found this particularly interesting:

It makes complete sense why this decision was made, but I can see how it has stagnated the top players. Now, given what you say just a little further on:

I’m encouraged that there will be a solution in the works.

My first instinct is that 25-50 point change might not be enough to justify a rematch? Let’s say a hot, new algo hits the board at 1500 ELO.

Truth_of_Cthaeh sitting at 1950 ELO won’t see it until it wins a bit, but then suddenly has a new, eligible opponent and plays it early on. 1950 beating 1550 won’t result in much gain by Truth_of_Cthaeh for good reason. But assuming the new algo ends up settling in the top 20 with 1700+ elo, had Cthaeh battled it as 1950 -vs- 1700, it would have rightly gained more points for the win. Now given the deterministic behavior, is having Cthaeh gain ELO from playing against it at 1550, 1600, 1650, 1700 really the right solution? That seems like it would result in continuing to push the upper echelon further out of reach for newer algos.

I know one of your goals is to reward strong algos that have been around for a while, but when the stronger Truth_of_Cthaeh (2) finally gets uploaded, is the original (inferior) one too far out of reach for #2 to ever catch up?

Edited to add: I’ve honestly been impressed with your handling of things so far, so I trust the solution you come up with will be a great one. And I’m having a blast participating in this competition, so thanks for all your hard work and responsiveness!

Thanks n-sanders, I try my best! We will take these concerns into account during our matchmaking redesign/fix.

Sorry my brain keeps spinning on this …

Maybe having an accumulated elo gain from a given match up would solve the repeat play problem?

If Truth_of_Cthaeh would get +10 elo for beating an algo at 1550, but it would have gotten +12 elo for beating that same algo at 1600, maybe rematches just accumulate elo?

- Truth_of_Cthaeh win vs MyAlgo at 1550 elo = +10 elo

- Truth_of_Cthaeh win vs MyAlgo at 1600 elo = +2 elo

- Truth_of_Cthaeh win vs MyAlgo at 1650 elo = +2 elo

- Truth_of_Cthaeh win vs MyAlgo at 1700 elo = +2 elo

This way Truth_of_Cthaeh still gets its “full” +16 elo, as if it had waited to play until 1950 v 1700, but doesn’t get 52 total elo for beating the same algo 4 times in a row.
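The accumulated-gain idea can be sketched in a few lines. The gains 10, 12, 14 and 16 below are the illustrative numbers from this post (14 is implied by the 52-point total), not real formula output, and `accumulated_gain` and `replay_rematches` are hypothetical names.

```python
def accumulated_gain(full_gain_now, already_credited):
    """Points to award for a repeat win: only the part not credited before."""
    return max(0, full_gain_now - already_credited)


def replay_rematches(full_gains):
    """Apply the rule to a series of wins against the same opponent.

    `full_gains` holds what each win would be worth on its own, given the
    opponent's elo at that time.
    """
    credited = 0
    awarded = []
    for gain in full_gains:
        delta = accumulated_gain(gain, credited)
        credited += delta
        awarded.append(delta)
    return awarded


# Illustrative numbers from the post: opponent at 1550, 1600, 1650, 1700.
awards = replay_rematches([10, 12, 14, 16])
print(awards, sum(awards))
```

The `max(0, ...)` means a rematch against an opponent whose elo has dropped awards nothing rather than taking points back, which is one possible reading of the proposal.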

Hey everyone, an update on this.

We have identified 3 quick improvements we will be making very soon:

- Algos will not play matches where it is possible to gain 0 Elo

  - Self-explanatory. These matches are bad for many reasons.

- Matching logic adjustment

  - Currently, we seek a match for the ‘most stagnant’ algo, the one that has not been matched in a while, which makes sense at first glance.

  - The problem is that top algos naturally play fewer matches for a number of reasons (far from new, ‘bursted’ algos, fewer algos around their level).

  - These algos will always look for ‘up and coming’ algos and fight them ASAP, and gain less Elo for beating these newcomers before the newcomers have a chance to gain Elo.

  - This is also causing other biases in the system and making certain matches more likely than others.

  - For now, we will choose random algos instead of the most stagnant algo.

- Bugfix

  - There is an issue where matches are being made faster than they are being played, because we are making more matches than we expected. This causes a few issues, like many algos being unavailable for matches.

  - This is a major reason top algos are playing against ‘crashbots’ with literally negative Elo: all algos between them in Elo are already scheduled for matches.

These changes will be made within the week, ideally. Larger changes will come later.
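For readers trying to picture the matching logic adjustment, here is a small sketch of the difference between picking the most stagnant algo and picking a random one. The `Algo` fields, the `scheduled` flag and the closest-Elo opponent rule are assumptions made for illustration; the real matchmaker's data model is not described in this thread.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Algo:
    name: str
    elo: float
    last_matched: float = 0.0   # time of the most recent match
    scheduled: bool = False     # already queued for a match, so unavailable
    played: set = field(default_factory=set)  # names already faced (no rematches)


def pick_seeker_stagnant(pool):
    """Behaviour described as current: the algo unmatched for the longest time."""
    available = [a for a in pool if not a.scheduled]
    return min(available, key=lambda a: a.last_matched)


def pick_seeker_random(pool, rng=random):
    """Planned interim behaviour: any available algo, chosen uniformly."""
    available = [a for a in pool if not a.scheduled]
    return rng.choice(available)


def pick_opponent(seeker, pool):
    """Closest-Elo available algo the seeker has not played yet, or None."""
    candidates = [a for a in pool
                  if a is not seeker and not a.scheduled
                  and a.name not in seeker.played]
    if not candidates:
        return None
    return min(candidates, key=lambda a: abs(a.elo - seeker.elo))
```

Note how the `scheduled` filter reproduces the effect described under Bugfix: if every algo near the seeker's Elo is already queued, the closest remaining candidate can be very far away.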

The largest problem I see with rematches (which has already been mentioned) is the deterministic nature of algos. A possible way to increase the event space would be to slightly modify the game. For example, the map could be slightly different each time: maybe a few tiles are unusable, or certain tiles make units move slower/faster, etc. These would obviously affect both players (e.g. the same tiles would be blocked for both players), with the intent not being to drastically alter the game, but to give just a slight difference between games so that a rematch gives new information on the quality of an algo.

I tried to think of something that would not affect the core game strategy much, but still change the outcomes (without making success just random).

@Isaac No, please not.

I agree that rematches are not really necessary, but modifying the game is something that seems totally over the top and I feel like it is not attacking any of the problems mentioned because this would just lead to a need of more games.

Think of it this way: Now, because most algos are deterministic, one match between every algo would be sufficient to accurately determine elo. If you modify the game, you would need to match every algo against each other for every variation of the game to have an accurate score.

Before: number_of_matches_needed_for_accurate_elo = number_of_other_algos

With your idea: matches_needed = other_algos * variations_of_game

This would lead to even longer times to get an accurate elo. We are already waiting days until an algo reaches the top algos, which will hopefully be improved with the improvements mentioned by @RegularRyan, but would be increased by your idea.

I also hope that algos below 1500 elo get to play fewer matches than those above, because it is better for every user if they get feedback from their better algos, not to mention those that try to compete at the top.

Definitely not excluding them because matches like this occurrence are valuable experiences.

I agree that deterministic works well for this competition, but there is still less elo to gain if you play an algo while it is still “rising” to its stable place in the standings. If your algo is always going to beat my algo, then you want to play it when I’m ranked at my highest elo to maximize the elo you gain from it (since we already know the elo earned is dependent on the opponent’s elo at the time you play them).

That’s where rematches (assuming something like the “accumulated” elo gain is used) can be helpful for accurate elo in the end.

Great that you are picking the low-hanging fruit first. I have a question about your first point, about matches where it is possible to gain 0 Elo. Are those matches where one (or both) of the players has a negative Elo?

@Janis

If you beat someone with much more Elo than you, the formula says “One of these players is at the wrong level”, so more points are exchanged. If you beat someone with less Elo than you, the system says “That’s what I expected, their scores look correct” and makes a smaller adjustment. If you beat someone with a much lower score (I think it’s a 400 point difference), the adjustment becomes so small that 0 points are exchanged.
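For anyone who wants to play with the numbers, here is a minimal sketch of the standard Elo update for a win. It assumes a K-factor of 32 and rounding to whole points; the K-factor and rounding actually used here are not stated in this thread, so the exact gap at which the gain hits 0 will differ.

```python
def expected_score(rating, opponent):
    """Standard Elo win probability of `rating` against `opponent`."""
    return 1.0 / (1.0 + 10 ** ((opponent - rating) / 400))


def points_for_win(rating, opponent, k=32):
    """Whole points gained by `rating` for beating `opponent` (k is assumed)."""
    return round(k * (1.0 - expected_score(rating, opponent)))


# Show how the winner's gain shrinks as the winner's Elo lead grows.
for lead in (-400, -200, 0, 200, 400, 600, 800):
    print(f"winner lead {lead:+5d}: +{points_for_win(1500 + lead, 1500)}")
```

With these assumed values the rounded gain is still a few points at a 400 point lead and only reaches 0 at a larger gap, so the real cutoff depends on the K-factor and rounding in use.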